Let’s make sure that our critical content is not marooned on data islands

The sustainability of digital projects varies widely. Let’s have a look at two examples.

A. Quick-fix, short-term, unsustainable

Typically, commercial web projects are created to fulfil some short-lived need (a few years or less). They are built on proprietary internal structures (e.g. internal databases) and interfaces (both web and API) that can be varied at will to satisfy immediate demands.

This is often done with little regard for their long-term viability or their compatibility with other systems. Their continued viability depends on a pattern of constant change and maintenance. These projects are unsustainable, but that’s fine, as they are not intended to be sustainable: their very unsustainability is what makes them fast to develop and easy to modify and deploy.

B. Long-term, archival

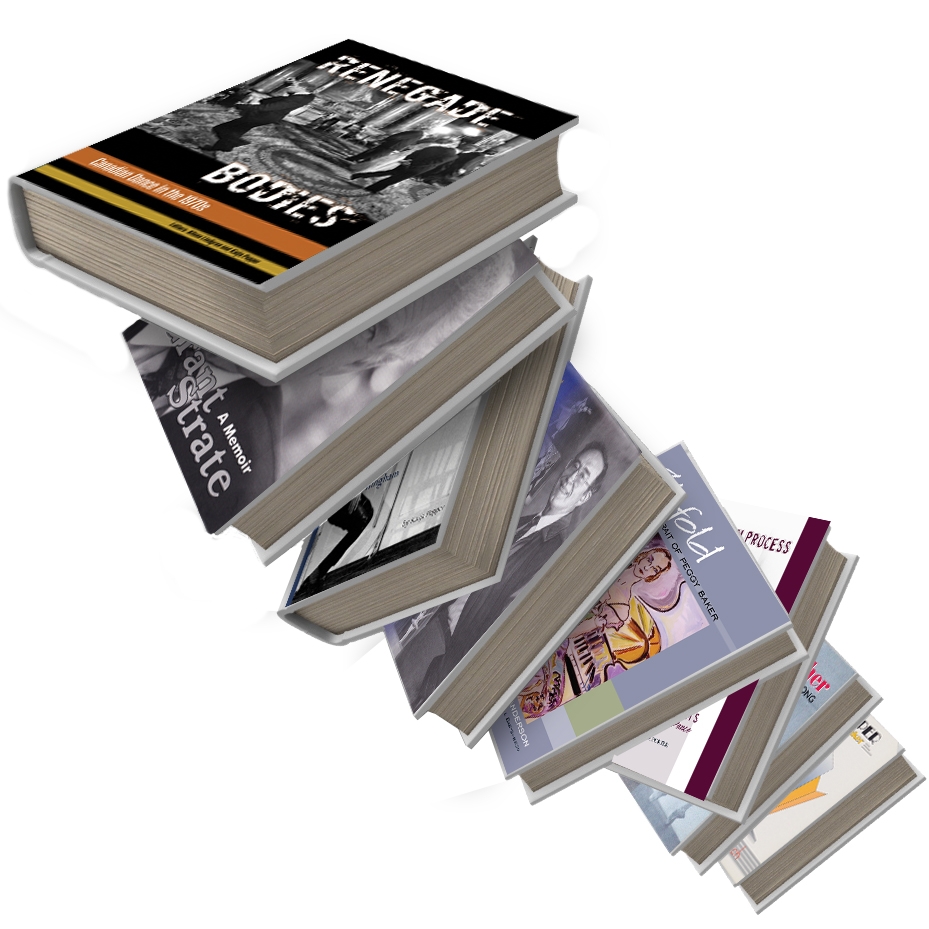

At the other end of the scale are archiving projects intended to preserve data over a much longer term, for example in museums and libraries. Here both data and metadata tend to be far richer: the data related to a credit card transaction is much smaller than that relating to a museum artefact.

To build for the long-term, keep it simple

Simpler structures are easier to maintain in the long term. This needs to be coupled with metadata (data that provides information about other data) in systems that are standardised yet sufficiently rich and flexible to cope with the enormous variation between records – a museum may hold an image of a poem engraved on a spacecraft as well as a 200-tonne Egyptian obelisk, and both need to be represented in archiving systems. Not easy.

Long-term viability rests squarely on foundations of simplicity, openness, and standardisation.

Anything complicated will require similarly complicated tools to work with it, which in turn require more maintenance and create a dependence on technologies that are likely to disappear within the anticipated lifetime of the data. For example, cough, look what happened to CD-ROM.

Open standards

Two key pieces of open standardisation are the Resource Description Framework (RDF) and the Dublin Core Metadata Initiative (DCMI). RDF provides a way of representing data and metadata through the web, defining a generic base on which domain-specific resource models can be built.

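A good example of the practical use of RDF is RSS 1.0 (RDF Site Summary), an early version of the feed format that is more widely known as a way of distributing news feeds from websites. The later RSS 2.0 (Really Simple Syndication), which is the basis for podcasts, is plain XML rather than RDF.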

DCMI is an attempt to standardise the representation of multimedia assets and metadata across projects (its core element set is also published as an ISO standard, ISO 15836), and it fits particularly well within RDF. For example, it’s entirely reasonable for a site’s news feed (using RSS 1.0) to contain the text of news articles and related media described using DCMI terms, with the whole ultimately forming an RDF data structure.
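As a rough illustration of how Dublin Core terms sit inside RDF, here is a minimal sketch using only the Python standard library. The two namespace URIs are the published RDF and Dublin Core element namespaces; the object identifier, the field values, and the `build_record` helper are made up for this example.

```python
import xml.etree.ElementTree as ET

RDF_NS = "http://www.w3.org/1999/02/22-rdf-syntax-ns#"
DC_NS = "http://purl.org/dc/elements/1.1/"

# Ask ElementTree to use the conventional prefixes when serialising.
ET.register_namespace("rdf", RDF_NS)
ET.register_namespace("dc", DC_NS)


def build_record(uri, fields):
    """Return an rdf:RDF element describing one resource with Dublin Core terms.

    `fields` is a list of (term, value) pairs, e.g. ("title", "...").
    """
    root = ET.Element(f"{{{RDF_NS}}}RDF")
    desc = ET.SubElement(
        root, f"{{{RDF_NS}}}Description", {f"{{{RDF_NS}}}about": uri}
    )
    for term, value in fields:
        ET.SubElement(desc, f"{{{DC_NS}}}{term}").text = value
    return root


record = build_record(
    "https://example.org/objects/obelisk-1",  # hypothetical identifier
    [
        ("title", "Egyptian obelisk"),
        ("type", "PhysicalObject"),
        ("description", "A 200-tonne granite obelisk"),  # illustrative value
    ],
)

xml_text = ET.tostring(record, encoding="unicode")
print(xml_text)
```

The record itself is a handful of plain elements, which is the point: any XML-aware tool, now or in the future, should be able to read it without the software that wrote it.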

DCMI exists because many archiving projects have much in common. For example, the concept of an author of a book will be largely the same across libraries, and the concept of a location will be common across archaeological projects. Despite the requirement for simplicity, even DCMI suffers from an element of bloat, where every apparently simple property turns into a hierarchy with its own taxonomy and rules – it is a very difficult balance to strike. RDF can be serialised in multiple formats, most often XML (RDF/XML) and JSON (JSON-LD), alongside plain-text formats such as Turtle.
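To show what the JSON flavour can look like, the sketch below writes a small Dublin Core description as JSON-LD using Python's standard `json` module. The `to_json_ld` helper, the identifier, and the values are illustrative assumptions; only the `@context` / `@id` keywords and the Dublin Core namespace come from the published specifications.

```python
import json

DC_NS = "http://purl.org/dc/elements/1.1/"


def to_json_ld(uri, fields):
    """Build a JSON-LD document from (term, value) pairs.

    A term that appears more than once is collected into a list.
    """
    doc = {"@context": {"dc": DC_NS}, "@id": uri}
    for term, value in fields:
        key = f"dc:{term}"
        if key not in doc:
            doc[key] = value
        elif isinstance(doc[key], list):
            doc[key].append(value)
        else:
            doc[key] = [doc[key], value]
    return doc


doc = to_json_ld(
    "https://example.org/objects/plaque-7",  # hypothetical identifier
    [
        ("title", "Poem engraved on a spacecraft"),
        ("subject", "poetry"),
        ("subject", "spaceflight"),
    ],
)

json_text = json.dumps(doc, indent=2)
print(json_text)
```

The same description could equally be written as RDF/XML; the choice of serialisation does not change the underlying statements.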

How can we go about improving the sustainability of digital projects?

RDF provides a structure for defining the overall project, and DCMI provides a core of definitions for the shape of the media and its related metadata, so we essentially don’t need to worry about those things at a fine-grained level. Those definitions are still sufficiently complex, however, that we require tools to interact with the repository.

The tools themselves do not need to be sustainable, and they can be improved or completely replaced over time, applying new technologies as they become available. For example, there are now systems that can use artificial intelligence to generate descriptions and keywords from an image, something that was impossible just a few years ago. The data resulting from such a system will still feed quite nicely into DCMI in RDF.
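One way to picture this separation is to treat the tool as a swappable function and the record as the stable part. The sketch below is purely illustrative: `stub_tagger` is a hypothetical stand-in for whatever AI captioning system is in use, and the record is a plain dictionary keyed by Dublin Core terms rather than any particular repository's format.

```python
from typing import Callable, Dict, List

Record = Dict[str, List[str]]


def stub_tagger(image_path: str) -> Dict[str, object]:
    """Hypothetical stand-in for an AI captioning tool; returns fixed values."""
    return {
        "description": "A poem engraved on a metal plate",
        "keywords": ["poem", "spacecraft"],
    }


def enrich(record: Record, image_path: str,
           tagger: Callable[[str], Dict[str, object]]) -> Record:
    """Return a copy of `record` with the tagger's output added as DC terms."""
    result = tagger(image_path)
    enriched = {key: list(values) for key, values in record.items()}
    enriched.setdefault("dc:description", []).append(str(result["description"]))
    subjects = enriched.setdefault("dc:subject", [])
    for keyword in result["keywords"]:
        if keyword not in subjects:  # avoid duplicate keywords
            subjects.append(keyword)
    return enriched


original = {"dc:title": ["Engraved plaque"], "dc:subject": ["poem"]}
updated = enrich(original, "plaque.jpg", stub_tagger)  # hypothetical file name
print(updated)
```

Replacing `stub_tagger` with a different tool later changes nothing about the record's shape, which is what lets the data outlive the tooling.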

Openness (as exemplified by web and internet standards) is critical to sustainability.

Companies change tack, go bust, get bought out, have critical people leave, and as a result, their focus and direction can change. This can mean that critical software, systems and processes are abandoned.

The best way to avoid being trapped with orphaned software is to ensure it’s open-source, so that if it is abandoned, it can continue to be maintained and developed by others. For this to work effectively, you need a certain critical mass of enthusiastic users who individually or collectively are able to keep projects working and useful. In the absence of open-source applications, we can at least hope that the applications use open file formats such as XML, JSON, YAML, JPEG, MP4, Matroska, or CSV, and avoid complex, proprietary formats such as Photoshop and Microsoft Office, so that we can be certain that our critical content is not marooned on data islands.